Self-flying drone dips, darts and dives autonomously through trees at 30 mph.

Andrew Barry, from MIT’s Computer Science and Artificial Intelligence Lab (CSAIL), created this self-flying drone, which avoids trees autonomously, as part of his PhD thesis.

Andrew Barry said:

“Everyone is building drones these days, but nobody knows how to get them to stop running into things. Sensors like LIDAR are too heavy to put on small aircraft, and creating maps of the environment in advance isn’t practical. If we want drones that can fly quickly and navigate in the real world, we need better, faster algorithms.”

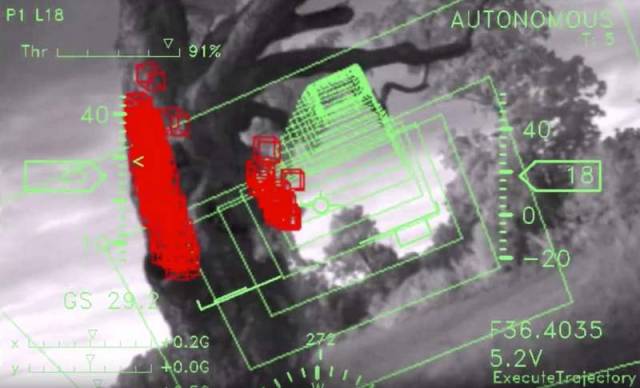

Running 20 times faster than existing software, Barry’s stereo-vision algorithm allows the drone to detect objects and build a full map of its surroundings in real time. The software, which is open-source and available online, operates at 120 frames per second, extracting depth information in about 8.3 milliseconds per frame.
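To see where the 8.3-millisecond figure comes from, the arithmetic can be sketched in a few lines of Python. The function names are made up for this illustration, and the distance-per-frame calculation is simply the article's 30 mph and 120 frames per second combined, not a number reported by the researchers.

```python
# One mile per hour expressed in metres per second (1 mile = 1609.344 m).
MPH_TO_MPS = 1609.344 / 3600.0


def frame_period_ms(fps: float) -> float:
    """Time available per frame, in milliseconds, at a given frame rate."""
    return 1000.0 / fps


def metres_per_frame(speed_mph: float, fps: float) -> float:
    """Distance the aircraft covers between two consecutive frames."""
    return speed_mph * MPH_TO_MPS / fps


if __name__ == "__main__":
    print(round(frame_period_ms(120), 1))       # 8.3 (milliseconds)
    print(round(metres_per_frame(30, 120), 3))  # 0.112 (metres)
```

In other words, at 30 mph the drone moves roughly 11 centimetres between frames, so each depth estimate has to be ready in about 8.3 milliseconds to keep up.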

The drone, which weighs just over a pound and has a 34-inch wingspan, was made from off-the-shelf components costing about $1,700, including a camera on each wing and two processors no fancier than the ones you’d find on a cellphone.

How it works

Traditional algorithms for this problem take the images captured by each camera and search through the depth field at multiple distances – 1 meter, 2 meters, 3 meters, and so on – to determine whether an object is in the drone’s path.

Such approaches, however, are computationally intensive, meaning that the drone cannot fly any faster than 5 or 6 miles per hour without specialized processing hardware.
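The multi-distance search described above can be illustrated with a minimal Python sketch. This is a generic block-matching example, not Barry's code or any specific library's implementation. The focal length, camera baseline, block size and the synthetic images are all assumptions chosen for the demonstration, and the function names are hypothetical.

```python
import numpy as np


def depth_to_disparity(depth_m, focal_px, baseline_m):
    """Pinhole stereo relation: disparity (pixels) = focal * baseline / depth."""
    return int(round(focal_px * baseline_m / depth_m))


def block_sad(left, right, row, col, disparity, block=5):
    """Sum of absolute differences between a left-image block and the
    right-image block shifted left by `disparity` pixels."""
    l = left[row:row + block, col:col + block].astype(np.int32)
    r = right[row:row + block,
              col - disparity:col - disparity + block].astype(np.int32)
    return int(np.abs(l - r).sum())


def best_depth(left, right, row, col, depths_m, focal_px, baseline_m, block=5):
    """Try every candidate depth for one block and return the best match."""
    costs = {}
    for z in depths_m:
        d = depth_to_disparity(z, focal_px, baseline_m)
        if col - d < 0:
            continue  # shifted block would fall outside the right image
        costs[z] = block_sad(left, right, row, col, d, block)
    return min(costs, key=costs.get), costs


if __name__ == "__main__":
    # Assumed camera parameters, for illustration only.
    focal_px, baseline_m = 100.0, 0.5

    # Synthetic scene: a random texture seen at 2 m, i.e. a 25-pixel shift.
    rng = np.random.default_rng(0)
    right = rng.integers(0, 256, size=(60, 200), dtype=np.uint8)
    left = np.roll(right, 25, axis=1)  # left[:, c] == right[:, c - 25]

    depth, costs = best_depth(left, right, row=20, col=100,
                              depths_m=[1.0, 2.0, 3.0, 4.0, 5.0],
                              focal_px=focal_px, baseline_m=baseline_m)
    print(depth, costs[depth])  # the 2 m hypothesis matches exactly (cost 0)
```

Every extra distance adds another comparison for every block in the image, which gives a sense of why checking many depths on each frame becomes expensive on small processors.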

Source: csail.mit
